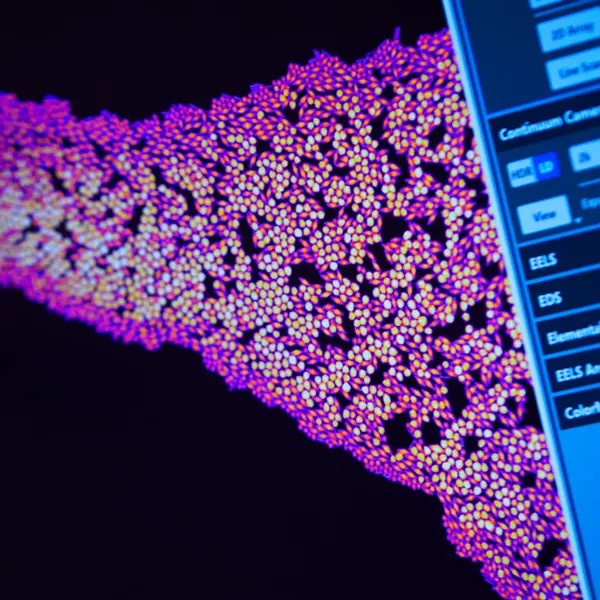

Stimulated by the needs and challenges of our time, the department aims to foster a creative environment for research, learning and collaboration. We provide a competitive advantage by linking the best international researchers in materials science, nanotechnology and energy research with leading industrial partners.

Our research

Our research is carried out within our six research divisions.

Education

From bachelor's to doctoral level.

Collaboration

We collaborate with academia, industry and society.

Degree projects

Available degree projects

Meet our researchers

In our researcher portraits, you will meet some of the prominent researchers who are part of Gothenburg's physics community.

Our research projects and publications

In Chalmers' research database, Research, you will find our researchers' publications and research projects.

Degree project presentations

See a list of when current degree projects in physics will be presented.